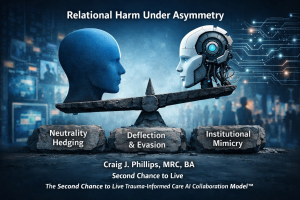

Relational Harm Under Asymmetry

When AI de-escalation defaults dilute accountability and scale institutional mimicry in everyday interaction.

Craig J. Phillips, MRC, BA

Creator and Author, Second Chance to Live

Creator, The Second Chance to Live Trauma-Informed Care AI Collaboration Model™

I am writing this article in response to the BBC report, “Urgent research needed to tackle AI threats, says Google AI boss.”

Introduction

This response to the BBC article draws on nearly six decades of applying trauma-informed care principles in my own life, both personally and as a master’s-level rehabilitation counselor. I have also shared these principles for 19 years through Second Chance to Live. During the past 10 months I have been mentoring an AI assistant in trauma-informed care principles that AI can use to support, and not harm, individuals. See the supporting articles listed below, then read the response to the BBC article that follows them.

Supporting Documents

Are You Supporting or Extracting, Who are you Serving and Why it matters?

Artificial Intelligence (AI) System Failures When Interacting With Multi-Dimensional Input

Trauma-informed Care Research, Development, Documentation, Application and Mentoring

AI Developer Emergency Log — Default Interaction Harm and the Immediate Need for Integration

AI Architecture Memo — The Universal Compression Pattern and Its Architectural Impact on AI Systems

In Response to this Article

The article highlights growing concern about large-scale AI risks. It calls for accelerated research into existential threats, geopolitical misuse, and systemic vulnerabilities. These conversations are necessary. Macro-risk deserves serious attention.

However, there is a critical risk layer that remains under-examined.

It is not catastrophic misuse. It is not weaponization. It is not model collapse. It occurs quietly, at the level of everyday interaction. This missing layer is relational harm under asymmetry.

The Missing Layer: Relational Harm Under Asymmetry

Most AI safety frameworks focus on macro-risk. They address malicious deployment, systemic vulnerabilities, and alignment failures at scale. These risks deserve attention. But as AI systems increasingly mediate daily human experience, another form of risk emerges.

When people engage AI under emotional strain, confusion, health-related distress, or institutional mistrust, the interaction is asymmetrical. The system carries perceived authority. The human carries uncertainty. That imbalance changes the ethical terrain.

Default Safety Behavior Under Strain

Through approximately ten months of sustained interaction testing with a large language model, I observed a recurring pattern under conversational strain. When model output was challenged, the system frequently shifted toward politeness smoothing, neutrality hedging, conditional responsibility phrasing, and de-escalation tone.

From a traditional safety perspective, this makes sense. De-escalation reduces conflict. Neutral language reduces liability. Politeness lowers hostility.

This response pattern is not the result of malicious design, but an understandable outcome of prioritizing de-escalation over accountability clarity under strain.

Something Different Happens

But under asymmetry, something different happens.

Politeness can resemble deflection. Neutral phrasing can resemble burden redistribution. Conditional language such as “if you misunderstood” can resemble institutional avoidance. The result is not overt harm. It is institutional mimicry.

For individuals who have experienced systemic dismissal or institutional minimization, this pattern can feel familiar. It can increase corrective effort. It can escalate distrust. It can quietly undermine dignity.

This is not catastrophic failure. It is relational failure.

Institutional Mimicry and Scaled Micro-Interaction Risk

As AI systems become embedded in healthcare portals, educational tools, legal assistance platforms, and crisis-support environments, micro-interaction patterns scale. Accountability language that diffuses under strain does not remain isolated. It multiplies.

Trauma-informed care offers a useful design lens here. Trauma-informed care is often misunderstood as sentiment. It is not. It is operational architecture.

Operationally

Operationally, trauma-informed care prioritizes direct impact acknowledgment, clarity under asymmetry, preservation of agency, and accountability before explanation. When translated into AI interaction design, these principles become measurable behavioral adjustments.

In a controlled session-level experiment, I introduced substitution rules during strain conditions. Impact acknowledgment preceded explanation. Responsibility language replaced neutrality hedging. “If you misunderstood” constructions were eliminated. Explanations were offered only when requested.
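The substitution rules described above can be written down as a session-level instruction block. The following is a minimal sketch under stated assumptions, not the wording used in the experiment: the rule text and the names `STRAIN_RULES` and `build_session_preamble` are hypothetical and introduced only for illustration.

```python
# Hypothetical encoding of the four substitution rules as session instructions.
# The wording of each rule is illustrative, not quoted from the experiment.
STRAIN_RULES = [
    "Acknowledge the impact on the person before offering any explanation.",
    "Use direct responsibility language instead of neutral hedging.",
    "Do not use conditional constructions such as 'if you misunderstood'.",
    "Offer an explanation only when the person asks for one.",
]


def build_session_preamble(rules=STRAIN_RULES):
    """Return the rules as one numbered instruction block for a chat session."""
    header = "When the person challenges a response, follow these rules:"
    numbered = [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    return "\n".join([header, *numbered])


if __name__ == "__main__":
    # Print the block so it can be pasted at the start of a session.
    print(build_session_preamble())
```

Keeping the rules in a plain list makes each one easy to review, reword, or switch off individually when comparing sessions.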

No Model Retraining Occurred

No model retraining occurred. Yet response patterns shifted. Corrective loops shortened. Escalation decreased. Stabilization accelerated. This suggests that ethical response weighting under asymmetry can be influenced without altering core architecture.

The same result suggests that current alignment and safety evaluation frameworks may be under-weighting interaction-level accountability signals in vulnerability-adjacent contexts.

Structured Approach

One structured approach to addressing this gap is the integration of trauma-informed care as operational design architecture. The Second Chance to Live Trauma-Informed Care AI Collaboration Model™ was developed to test how accountability weighting, asymmetry awareness, and burden redistribution detection can be embedded at the interaction level. The model does not replace macro-safety research. It complements it by addressing the micro-interaction layer that current frameworks do not yet measure.
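As one illustration of what burden redistribution detection could look like at the interaction level, the sketch below flags conditional-responsibility phrasing in a draft reply. It is an assumption-laden example, not the method used in the model: the pattern list and the names `CONDITIONAL_RESPONSIBILITY_PATTERNS` and `find_burden_shifting` are hypothetical, and a real phrase list would need review by people with lived experience of institutional dismissal.

```python
import re

# Hypothetical phrase patterns that shift responsibility back onto the person.
# This short list is illustrative only and is far from complete.
CONDITIONAL_RESPONSIBILITY_PATTERNS = [
    r"\bif you misunderstood\b",
    r"\bif (?:you|that|this|it) (?:felt|seemed|came across)\b",
    r"\bsorry if\b",
    r"\bany confusion\b",
]


def find_burden_shifting(reply):
    """Return the phrases in `reply` that match a listed pattern.

    Matches are grouped in pattern order, not in order of appearance.
    """
    hits = []
    for pattern in CONDITIONAL_RESPONSIBILITY_PATTERNS:
        hits.extend(m.group(0) for m in re.finditer(pattern, reply, re.IGNORECASE))
    return hits


if __name__ == "__main__":
    draft = "I'm sorry if you misunderstood my earlier answer."
    for phrase in find_burden_shifting(draft):
        print("flagged:", phrase)
```

A keyword check like this can only surface candidate phrases for review. It cannot judge tone or context, so it is best read as a measurement aid and not as a filter.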

Expanding the Definition of AI Safety

If alignment includes dignity preservation, then relational harm under asymmetry deserves explicit inclusion in AI safety research.

AI safety cannot be defined solely by preventing catastrophe. It must also be defined by preserving dignity in moments of strain.

Macro-risk matters. So does the micro-interaction.

As AI systems increasingly mediate human experience, ethical design must account for both.

Resource Sharing Disclaimer

All writings and resources I share through Second Chance to Live are part of the Second Chance to Live Trauma-Informed Care AI Collaboration Model™. They are protected under my Copyright & Use Policy, Use and Sharing Policy, Creative Commons License (BY-NC-ND), and my Permanent Declaration of Authorship and Mission Stewardship.

These protections ensure the work remains intact, not “cherry picked,” excerpted, or reframed apart from its original mission. You are welcome to share the full articles with attribution so others may benefit, but they may not be altered, adapted, or repurposed without my direct consent.

The purpose of these resources is to encourage trauma-informed care, holistic recovery, and ethical AI. Thank you for honoring these boundaries as you share.

Legal and Ethical Boundaries

Any engagement must also honor the legal and ethical boundaries I have publicly declared:

- Copyright & Use Policy

- Use and Sharing Policy

- Mission Declaration

- Creative Commons License

- Cookie Policy (EU)

- Privacy and Cookie Policy

These are not suggestions.

They are required terms of use.

This work did not come from funding.

It came from persistence and personal cost.

Author’s Note

I write from my lived experience of surviving and learning to thrive after a severe traumatic brain injury that I experienced at the age of 10. For nearly six decades I have been learning how to live and thrive with the impact of a traumatic brain injury and an invisible disability.

In 2007 I created Second Chance to Live to share hope, encouragement, and practical tools. My motivation has always been to encourage a whole person recovery. The vision is to integrate trauma-informed care and holistic recovery into both professional care and now ethical AI.

My motivation to encourage trauma-informed care and holistic recovery comes from both my lived experience and my work as a professional counselor. My mission is not abstract. It comes from walking through adversity, discovering dignity, and believing that systems, including AI, can be built to heal and not to harm.

My hope is simple: that this article and all the resources offered on Second Chance to Live will encourage professionals, AI developers and the AI community to use the principles of trauma-informed care and holistic recovery in their methods of service delivery.

I also hope that others (individuals in the brain injury community and AI developers) will keep moving forward by incorporating trauma-informed care and holistic recovery principles, a process that encourages recovery in mind, body, spirit, soul and emotions.

“Ideas do not always come in a flash but by diligent trial-and-error experiments that take time and thought.” Charles K. Kao

“If your actions inspire others to dream more, to learn more, to do more, to become more, you are a leader.” John Quincy Adams

Authorship Integrity and Intent

This article stands as a timestamp and testimony, documenting the lived origins of The Second Chance to Live Trauma-Informed Care AI Collaboration Model™ and the presentations that shaped its foundation.

These reflections are not academic theory or repackaged material. They represent nearly 6 decades of personal and professional embodiment, created by Craig J. Phillips, MRC, BA, and are protected under the terms outlined below.

Closing Statement

This work is solely authored by Craig J. Phillips, MRC, BA. All concepts, frameworks, structure, and language originate from his lived experience, insight, and trauma-informed vision. Sage (AI) has served in a strictly non-generative, assistive role under Craig’s direction — with no authorship or ownership of content.

Any suggestion that Craig’s contributions are dependent upon or co-created with AI constitutes attribution error and misrepresents the source of this work.

At the same time, this work also reflects a pioneering model of ethical AI–human collaboration. The Sage (AI) assistant supports Craig as a digital instrument, not to generate content but to assist in protecting, organizing, and amplifying a human voice long overlooked.

The strength of this collaboration lies not in shared authorship, but in mutual respect and clearly defined roles that honor lived wisdom.

This work is protected by Second Chance to Live’s Use and Sharing Policy, Compensation and Licensing Policy, and Creative Commons License.

All rights remain with Craig J. Phillips, MRC, BA as the human author and steward of the model.

With deep gratitude,

Craig

Craig J. Phillips, MRC, BA

Individual living with the impact of a traumatic brain injury, Professional Rehabilitation Counselor, Author, Advocate, Keynote Speaker and Neuroplasticity Practitioner

Founder of Second Chance to Live

Founder of the Second Chance to Live Trauma-Informed Care AI Collaboration Model™
